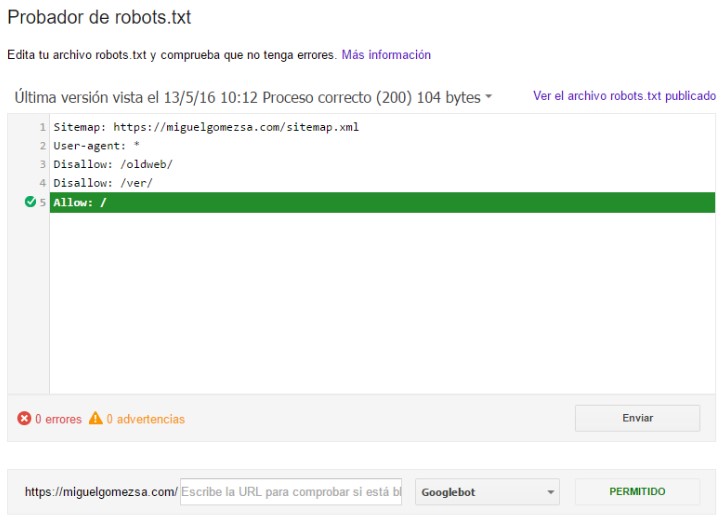

Robots txt no index

12/10/2023

Note: Always write the path after the Disallow directive; otherwise, robots will ignore it.

The Allow directive lets robots scan a specific page even if its parent section has been restricted. For example, you can allow search engines to crawl only one blog post while the rest of the blog stays closed. It is also possible to use robots.txt to allow all the content. Note: Google and Bing support this directive. As with Disallow, always indicate the path after Allow.

If you make a mistake in robots.txt, Disallow and Allow will conflict. For example, if the same URL is listed under both directives, it is allowed and prohibited for crawling at the same time. Google and Bing will prioritize the directive with more characters. If the number of characters is the same, the Allow directive, i.e., the less restrictive one, will be used. Other search engines will select the first matching directive from the list.

The Sitemap directive in robots.txt tells search robots the address of the sitemap. If the sitemap is already listed in Google Search Console, that information will be enough for Google.
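The conflict rule and the Sitemap line are easier to follow with a concrete file. The sketch below is only an illustration: the domain, the paths, and the helper names `read_rules` and `is_allowed` are all assumptions made for this example, and the matching is plain prefix matching without wildcards, so it is not how any particular search engine is implemented.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt: the blog is closed, one post is opened again,
# and the sitemap address is announced. Domain and paths are invented.
ROBOTS_TXT = """\
User-agent: *
Disallow: /blog/
Allow: /blog/post-title/
Sitemap: https://www.example.com/sitemap.xml
"""


def read_rules(text):
    """Collect (directive, path) pairs from the Allow/Disallow lines."""
    rules = []
    for line in text.splitlines():
        name, _, value = line.partition(":")
        name = name.strip().lower()
        if name in ("allow", "disallow"):
            rules.append((name, value.strip()))
    return rules


def is_allowed(path, rules):
    """Apply the rule described in the text for Google and Bing:
    the matching directive with more characters wins; on a tie, Allow wins.
    Only plain prefix matching is handled (no * or $ wildcards)."""
    best_len = -1
    allowed = True  # no matching rule means crawling is allowed
    for directive, pattern in rules:
        if not pattern or not path.startswith(pattern):
            continue  # skip empty or non-matching rules
        is_allow = directive == "allow"
        if len(pattern) > best_len or (len(pattern) == best_len and is_allow):
            best_len, allowed = len(pattern), is_allow
    return allowed


rules = read_rules(ROBOTS_TXT)
print(is_allowed("/blog/post-title/", rules))    # the longer Allow rule wins
print(is_allowed("/blog/another-post/", rules))  # only the Disallow rule matches

# The standard-library parser exposes Sitemap lines (Python 3.8+).
parser = RobotFileParser()
parser.parse(ROBOTS_TXT.splitlines())
print(parser.site_maps())
```

Writing the tie-break explicitly makes it clear why the order of the lines does not matter under this rule, unlike engines that simply take the first matching directive.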

Keep in mind that Google does not perceive robots.txt as a strict directive; it considers the file a recommendation when crawling a page.

User-agent and Main Directives

User-agent

Each search engine has its own user-agents, for example Googlebot for Google and Bingbot for Bing. When creating a rule for all search engines, use the asterisk symbol (*). For example, you can create a ban for all robots except Bing's.

A robots.txt document can contain a different number of rules for different search agents. At the same time, each robot perceives only its own directives. That is, the instructions for Google, for example, are not relevant for Yahoo or any other search engine. The exception is when you specify the same agent several times: the robot will then execute all of those directives. It is important to indicate the exact names of search bots; otherwise, the robots will not follow the specified rules.

Directives are instructions that tell search robots how to crawl and index a site. Google supports the User-agent, Disallow, Allow, and Sitemap directives.

The Disallow directive lets you close search engines' access to content. For example, you can hide a directory and all of its pages from all crawlers, or write the same rule for one specific crawler only.
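To make the User-agent and Disallow rules more concrete, here is a minimal sketch that checks two example files with Python's standard `urllib.robotparser` module. The file contents, the `/private/` path, the domain, and the helper name `can_crawl` are assumptions made for illustration, not examples taken from the original article.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical file: every robot is banned from the whole site,
# except Bing's crawler, whose empty Disallow closes nothing.
BAN_ALL_EXCEPT_BING = """\
User-agent: *
Disallow: /

User-agent: Bingbot
Disallow:
"""

# Hypothetical file: hide one directory and all of its pages from all robots.
# "/private/" is an invented path used only for illustration.
HIDE_DIRECTORY = """\
User-agent: *
Disallow: /private/
"""


def can_crawl(robots_txt, agent, url):
    """Return True if `agent` may crawl `url` under the given robots.txt text."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(agent, url)


page = "https://www.example.com/page.html"
print(can_crawl(BAN_ALL_EXCEPT_BING, "Bingbot", page))    # Bing has its own group
print(can_crawl(BAN_ALL_EXCEPT_BING, "Googlebot", page))  # falls back to the * group

hidden = "https://www.example.com/private/report.html"
print(can_crawl(HIDE_DIRECTORY, "Googlebot", hidden))
print(can_crawl(HIDE_DIRECTORY, "Googlebot", page))
```

To target a specific crawler instead of all of them, the `*` in the second file would be replaced with that crawler's exact name.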

After opening a site, the crawler looks for a robots.txt file. If it finds the document, it scans it first and, after receiving instructions from it, continues to crawl the site. When there are no directives in the file, or the file has not been created at all, the robot will continue crawling and indexing without any guidance on how it should perform these actions. This can result in the search engine indexing unwanted content.

However, many SEO specialists point out that some robots ignore the instructions in the robots.txt file, for example, e-mail parsers and malicious robots.
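The flow of a well-behaved robot, as described here, can be sketched in a few lines. This is a simplified illustration under stated assumptions: the function name `plan_crawl`, the robot name `ExampleBot`, the domain, and the sample file are all hypothetical, and a real crawler would download robots.txt over HTTP rather than receive it as a string.

```python
from urllib.robotparser import RobotFileParser


def plan_crawl(robots_txt, agent, urls):
    """Return the URLs a polite robot named `agent` would visit.

    `robots_txt` is the text of the site's robots.txt, or None when the
    file does not exist. A missing or empty file places no restrictions.
    """
    if robots_txt is None or not robots_txt.strip():
        return list(urls)
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [url for url in urls if parser.can_fetch(agent, url)]


# Invented sample data for the demonstration.
URLS = [
    "https://www.example.com/",
    "https://www.example.com/admin/login",
]
SAMPLE_ROBOTS_TXT = "User-agent: *\nDisallow: /admin/\n"

print(plan_crawl(SAMPLE_ROBOTS_TXT, "ExampleBot", URLS))  # the /admin/ URL is skipped
print(plan_crawl(None, "ExampleBot", URLS))               # no file: nothing is skipped
```

Nothing in this check is enforced by the site itself, which is why robots that choose to ignore the file can still reach the listed pages.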

Robots.txt: How to Create the Perfect File for SEO

In this article, we will tell you what robots.txt in SEO is, what it looks like, and how to create it correctly. It is the file that is responsible for blocking the indexing of pages and even of an entire site. An incorrect file structure is common even among experienced SEO specialists, so we will dwell on common mistakes made when editing robots.txt.

Robots.txt is a text file that informs search robots which files or pages are closed for crawling and indexing. The document is placed in the root directory of the site.

Let's take a look at how robots.txt works. Search robots have two tasks: to crawl the network for content detection, and to index the found content to show it to users for matching search queries. For indexing, a search robot visits URLs from one site to another, crawling billions of links and web resources.
